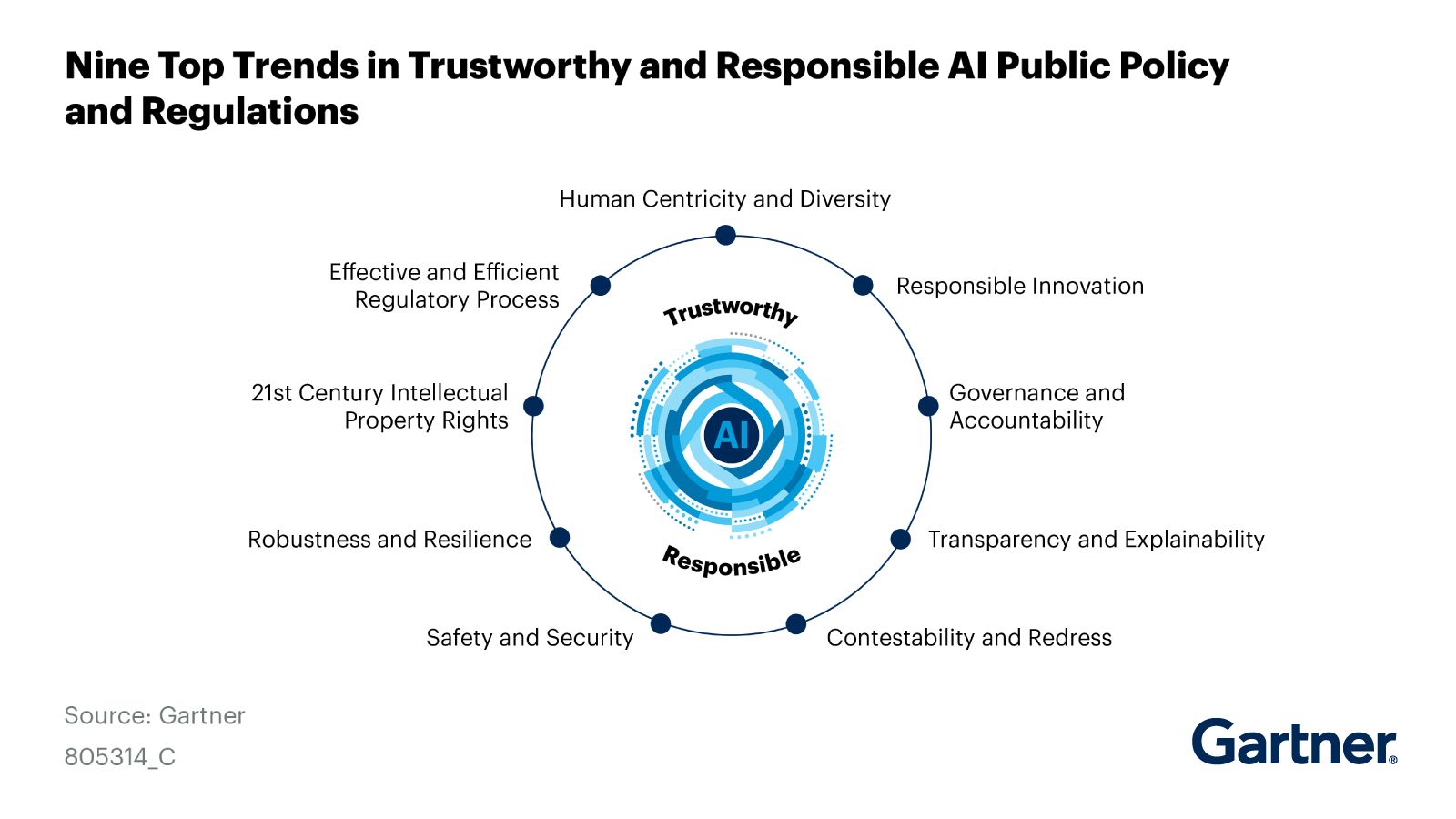

The gap between AI technology and the policies and regulations that govern it is widening, as AI applications have spread rapidly across industries over the past one to two years. Experts predict that the coming year will reveal AI's true potential. To ensure responsible development, however, policies and regulations must keep pace, addressing issues such as copyright, ethics, societal impact, and economic consequences before AI encroaches too far on human roles or undermines ethical principles. Policy and regulation are therefore integral to the development of AI.

As a leading Tech Enabler in Thailand, G-Able is committed to advancing technology and human potential together. Today, we introduce Gartner's AI policy and regulatory guidelines, which outline key considerations for developers and decision-makers before they implement AI.

Human Centricity and Diversity

AI must work in collaboration with humans. Because AI's initial input comes from people, it should not be used to shut people out of opportunities. Approaches that pair AI with humans, such as Human-in-the-Loop AI or involving people in the AI training process, are therefore crucial.

Diversity is also essential to preventing bias in AI. Supporting diversity among AI developers and ensuring that datasets are diverse are both key.

Responsible Innovation

Innovation in AI must be responsible, because uncontrolled AI could pose risks to society. It is therefore essential to give AI room to innovate while ensuring it has no negative impact on society. Tools such as regulatory sandboxes allow the impact of AI to be observed in a controlled environment so that necessary improvements can be made before real-world deployment.

Governance and Accountability

Governments worldwide increasingly recognize the importance of AI development. Because AI affects media, economic progress, and governance, countries are establishing standards for AI development that range from lenient to strict.

Transparency and Explainability

Transparency is crucial in the age of AI, where data is considered the "new oil." Trustworthy AI systems require reliable data, so it is essential to build transparent systems that allow data sources to be scrutinized and outcomes to be explained.

Contestability and Redress

Users should have control over their data. Once transparency is ensured, individuals should have the right to contest or refuse the collection of their data online. Users should be able to raise concerns at every stage, from the collection of data for AI training to the commercial use of the resulting databases.

Safety and Security

AI is expected to improve the detection of security anomalies by up to 30% in the future. Without proper standards, however, AI could itself become a cybersecurity threat. Using AI safely therefore requires strict controls that maintain security continuously and allow the system to be shut down immediately when problems arise.

Robustness and Resilience

AI systems must adapt to emerging threats rather than blindly following trends. They should withstand new threats such as viruses and malware, with built-in guardrails and safety brakes that prevent cascading failures. This ensures that systems can be quickly brought back online, updated, or fixed as needed.

21st-Century Intellectual Property Rights

AI challenges traditional copyright and intellectual property law, particularly during training. The law must clearly define permissible ways to train AI and to collect diverse datasets, so that the rights of original owners are protected, whether their work is art, writing, research, or another creative form.

Effective and Efficient Regulatory Process

Policy and regulation are widely recognized to lag behind emerging science, not only in AI but across all emerging fields. Rapid change makes timely regulation difficult. Liability and privacy laws therefore need to be developed in tandem with AI so that they remain current and effective.

In conclusion, as AI continues to advance, policies and regulations must evolve to promote responsible and sustainable development that benefits people while allowing AI to thrive in the business landscape.